Twelve-year-old Leo sits at a scarred oak desk, his thumb tracing the jagged edge of a chip in its surface. His assignment is a standard middle-school rite of passage: an essay on the Industrial Revolution. Twenty years ago, Leo would have been surrounded by a fortress of library books, their spines cracked and smelling of vanilla and decay. Ten years ago, he would have had sixteen browser tabs open, flickering with the blue light of Wikipedia and various educational blogs.

Today, Leo has a ghost in the room.

The cursor blinks on his screen, a rhythmic, taunting heartbeat. He isn’t typing. He is staring at a small text box at the bottom of his screen—a digital mouth waiting to be fed. He knows that if he whispers the right incantation, the ghost will exhale a three-page essay on steam engines and textile mills. It will be grammatically perfect. It will be structurally sound. And it will contain absolutely none of Leo.

This is the quiet crisis unfolding in classrooms from Seattle to Seoul. We are currently locked in a heated, often pedantic debate about whether schools should "allow" Artificial Intelligence. It is the wrong question. It is like debating whether schools should allow oxygen or gravity. The ghost is already in the room. The real question is whether we are going to teach Leo how to speak to it or let it speak for him until his own voice withers away.

The Myth of the Shortcut

The instinct of the modern educator is often one of protection. We see the raw power of Large Language Models (LLMs) and we see a threat to the fundamental mechanics of learning. If a machine can solve a quadratic equation or summarize To Kill a Mockingbird, why would a student ever bother to struggle?

Struggle is the point.

When we learn, our brains are physically reconfiguring themselves. Part of that work is building "myelin," a fatty sheath that wraps around the axons of our neurons, making signals travel faster and more efficiently. This process requires friction. It requires the frustration of a sentence that won't sit right and a math problem that refuses to resolve. The fear is that AI provides a "frictionless" education, a smooth slide toward a degree that leaves the brain as soft and unformed as it was on day one.

But consider Sarah, a high school junior with severe dyslexia. For Sarah, the "friction" of a standard writing assignment isn't a productive struggle; it is a brick wall. When she uses an AI as a scaffolding tool—not to write her paper, but to help her organize the chaotic storm of ideas in her head into a coherent outline—the wall becomes a staircase.

For Sarah, AI isn't a cheat code. It's a prosthetic for the mind. If we ban the tool in the name of "academic integrity," we aren't protecting her education. We are gatekeeping it.

The Invisible Literacy

We used to teach children how to use a library. We taught them the Dewey Decimal System, how to navigate an index, and how to tell the difference between a primary source and a biased editorial. These were the survival skills of the 20th century.

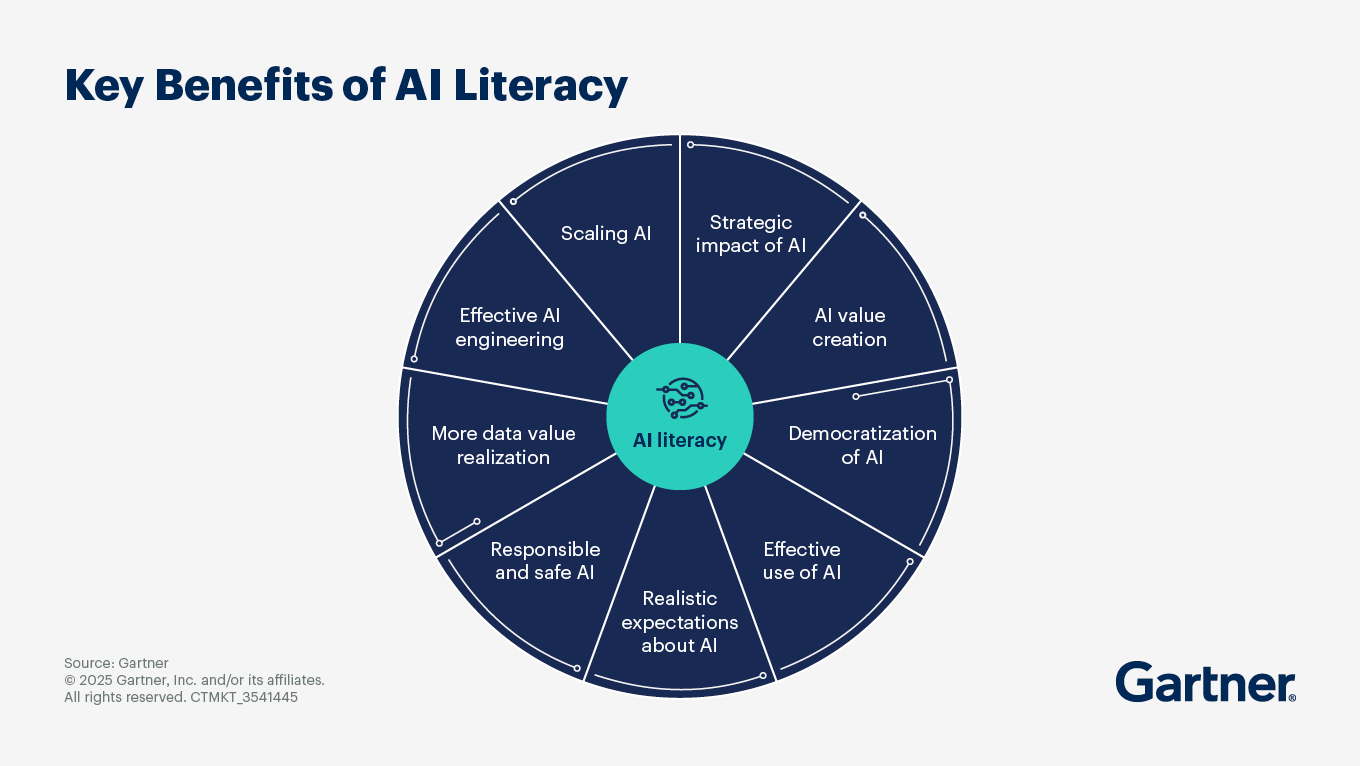

Now, we are entering the era of "Prompt Engineering," though that term is far too clinical for what it actually is. It is a new form of literacy. It is the ability to communicate with an alien intelligence that has read everything ever written but understands none of it.

If we don't teach this in schools, we are creating a new class divide. The children of the wealthy will learn to use AI as a high-powered research assistant, a coding partner, and a sophisticated editor at home or in private enrichment programs. The children in underfunded public schools, where the technology is simply banned or blocked by firewalls, will enter the workforce like people with a hand plow trying to compete with a tractor.

To be "AI literate" is to understand the "Hallucination." This is the industry term for when an AI simply makes things up with the confidence of a seasoned con artist. Because these models are essentially hyper-advanced autocomplete systems, they don't check facts; they predict the next likely word. If you ask an AI for a biography of a minor historical figure, it might invent a prestigious award they never won, simply because "won the Nobel Prize" is a high-probability string of words to follow "distinguished physicist."

If we don't teach Leo how to spot the lie, he becomes a puppet of the ghost. He becomes a consumer of truth rather than a creator of it.

The Death of the Five-Paragraph Essay

For decades, the American education system has been obsessed with the five-paragraph essay. Intro, three supporting points, conclusion. It is a rigid, mechanical format designed to be easy to grade.

The problem? AI is better at the five-paragraph essay than any human will ever be. It is the perfect medium for a machine. It is formulaic, predictable, and devoid of soul.

By clinging to these outdated modes of assessment, schools are practically begging students to use AI. We are testing them on their ability to act like machines, and then acting surprised when they use a machine to pass the test.

The shift must be toward "process over product." Instead of grading the final PDF, teachers are beginning to grade the "chat log." They want to see how the student interrogated the AI. Did they challenge its assumptions? Did they ask it to provide counter-arguments? Did they use it to find a flaw in their own logic?

This turns the classroom into a laboratory rather than an assembly line. It forces the student back into the driver's seat. It turns the ghost into a sounding board.

The Existential Weight

There is a deeper, darker corner of this conversation. It is the fear behind a simple question: if the machines can do the "thinking," what is left for us?

I remember the first time I saw an AI-generated poem that actually made me feel something. It was a cold, hollow sensation in the pit of my stomach. If a series of weights and biases in a server farm in Oregon can replicate the specific ache of human longing, then what am I?

Our children are feeling this, too. They see the headlines. They see the "Generative AI" tools creating art, music, and code. They are asking, "Why should I learn to code if the computer can do it? Why should I learn to draw?"

If we don't address this in schools, we are leaving them to face a profound existential crisis alone. We have to teach them that AI is a mirror, not a person. It can reflect our brilliance and our biases, but it cannot want. It has no skin in the game. It doesn't know the salt of a tear or the heat of a first kiss. It has data; we have life.

The New Architecture of the Classroom

Imagine a classroom where the "test" isn't a multiple-choice sheet, but a project where students must collaborate with an AI to solve a local community problem.

One group might be tasked with redesigning the city's bus routes to reduce carbon emissions. They use the AI to process vast amounts of traffic data and suggest optimizations. But then—and this is the crucial part—the students must go out into the world. They must interview the people who actually ride those buses. They must realize that the "optimized" route the AI suggested skips a senior center because it’s "inefficient" according to the algorithm.

The students then have to override the AI. They have to inject human empathy back into the math.

This is the education we owe them. We shouldn't be teaching them to compete with AI; we should be teaching them to lead it. We should be teaching them that the machine is a magnificent, lightning-fast idiot that needs a human heart to give it direction.

The stakes are not merely academic. They are societal. If we raise a generation that doesn't understand how these algorithms work, we are raising a generation of digital serfs. We are handing the keys of our culture, our politics, and our very reality to a handful of corporations that own the "ghosts."

The Ghost in the Oak Desk

Back at the oak desk, Leo finally types something.

He doesn't ask the ghost to write the essay. Instead, he types: "I'm writing about the Industrial Revolution. I want to focus on the perspective of a ten-year-old girl working in a coal mine. Can you give me five primary source accounts of what the air smelled like in those mines?"

The ghost obliges. It pulls from a vast digital library, offering descriptions of "choke-damp," the scent of wet slate, and the sulfurous tang of gunpowder.

Leo reads. He winces. He imagines the soot under his own fingernails. Then, he closes the laptop.

He picks up a pencil—a physical, yellow, graphite-filled stick of wood. He feels the weight of it. He feels the resistance of the paper. And then, he begins to write.

"The air in the mine didn't just smell like smoke; it tasted like the end of the world..."

The ghost provided the bricks, but Leo is the architect. The pencil moves, fueled by a brain that is struggling, growing, and stubbornly, beautifully human.

We don't need to protect our children from the future. We need to give them the tools to build it, ensuring that when the ghost speaks, it is always in service of the person holding the pencil.

The ghost is here. It’s time we taught the children how to haunt it back.